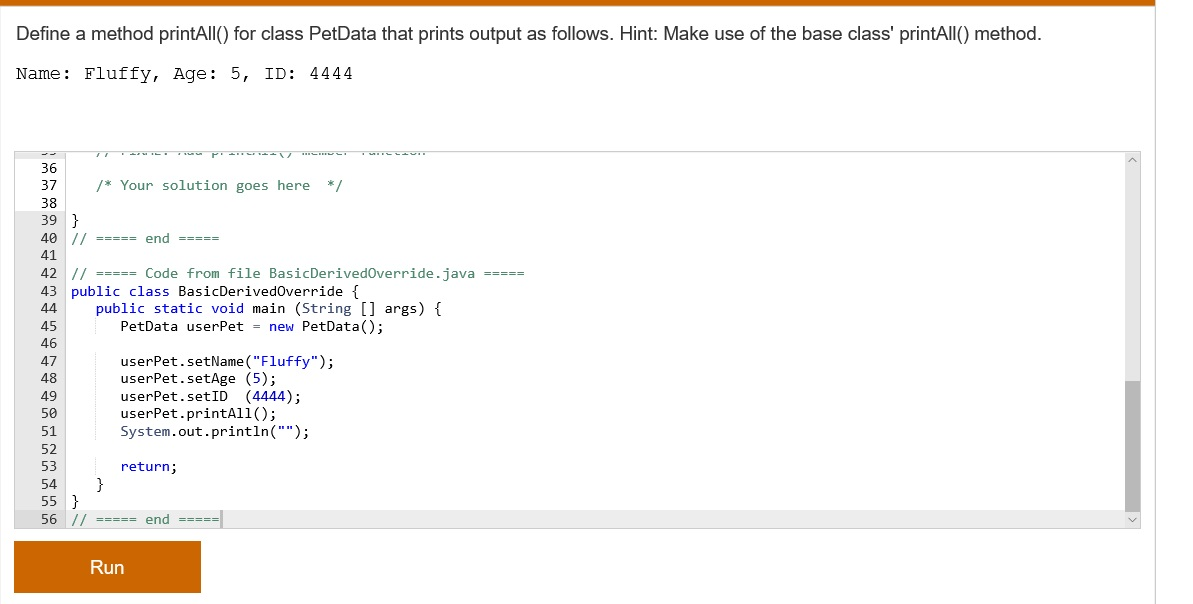

t-SNE is extensively used to visualize higher-dimensional data. It tries to figure out how similar the points are and groups them based on their similarity, and is therefore able to preserve the inner structure of the data.

Consider the figure below: t-SNE figures out the distances between the points and groups the closer points to form clusters in lower dimensions as well.

Fig 6: t-SNE on MNIST data

However, the distance between the clusters is not preserved after the transformation.
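To get a feel for this kind of embedding, here is a minimal sketch using scikit-learn's `TSNE`. It assumes scikit-learn is installed and uses the small 8x8 digits dataset bundled with the library as a stand-in for MNIST; the subset size, `perplexity` and `random_state` are illustrative choices of mine, not the settings behind the figure.

```python
from sklearn.datasets import load_digits
from sklearn.manifold import TSNE

# Assumption: scikit-learn's 8x8 digits stand in for MNIST to keep the run short.
digits = load_digits()
X, y = digits.data[:500], digits.target[:500]  # 500 images, 64 features each

# Project the 64-dimensional images down to 2D.
# perplexity and random_state are illustrative values, not tuned ones.
tsne = TSNE(n_components=2, perplexity=30, random_state=0)
embedding = tsne.fit_transform(X)

print(embedding.shape)  # (500, 2)
```

Plotting `embedding[:, 0]` against `embedding[:, 1]`, colored by `y`, should show the digits gathering into groups. The gaps between those groups should not be read as meaningful distances.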

Neighborhoods, i.e., clusters of points that lie close to each other, are preserved, and this holds even when there are multiple clusters. The algorithm figures out which points are close to each other and which are farther away. t-SNE tries to preserve the neighborhood of each point as the data is transformed from higher dimensions to lower dimensions. This is how t-SNE overcomes the limitation of linear projections during visualization: it preserves the inner structure of the data.
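One way to picture how the algorithm decides which points are close is the Gaussian conditional similarity from the t-SNE paper, which turns distances from a point into neighbor probabilities. The sketch below is a simplified illustration, not the full algorithm: it assumes a single fixed `sigma` for every point, whereas t-SNE tunes it per point from the perplexity. The function name and the toy points are made up for the example.

```python
import math

def conditional_similarities(points, i, sigma=1.0):
    """Return p_{j|i} for every j: how strongly point i picks point j as a neighbor."""
    xi = points[i]
    weights = []
    for j, xj in enumerate(points):
        if j == i:
            weights.append(0.0)  # a point is never its own neighbor
            continue
        sq_dist = sum((a - b) ** 2 for a, b in zip(xi, xj))
        # Gaussian kernel: nearby points get weights near 1, distant ones near 0.
        weights.append(math.exp(-sq_dist / (2.0 * sigma ** 2)))
    total = sum(weights)
    return [w / total for w in weights]  # normalize so the values sum to 1

# Two toy clusters: three points near the origin, two points near (5, 5).
points = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.2), (5.0, 5.0), (5.1, 5.0)]
p = conditional_similarities(points, i=0)
print([round(v, 4) for v in p])
```

Nearly all of the probability mass for point 0 lands on its two close neighbors, and the points in the far cluster receive almost none. t-SNE then looks for a low-dimensional layout whose similarities match these as closely as possible.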

While Principal Component Analysis (PCA) tries to find the dimensions with the maximum variance through a linear projection of the points, it sometimes fails to preserve the inner structure of the data in non-linear scenarios such as sinusoidal and cylindrical relationships.

t-SNE has been one of the most talked-about dimensionality reduction algorithms of recent years, especially for visualization, so I thought I'd take some time to help others develop an intuition for what it is actually doing.

Developed in 2008 by Laurens van der Maaten and Geoffrey Hinton, t-Distributed Stochastic Neighbor Embedding (t-SNE) is, unlike PCA, a non-linear method for visualizing higher-dimensional data in human-interpretable dimensions such as 2D or 3D.
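The contrast between a linear projection and t-SNE can be checked roughly in code. The sketch below assumes scikit-learn is installed and picks a 3D swiss roll as the non-linear example (my choice, not one from the text above). It scores both 2D embeddings with scikit-learn's `trustworthiness` function, which measures how well local neighborhoods are retained. The sample size, `perplexity` and `n_neighbors` are illustrative values.

```python
from sklearn.datasets import make_swiss_roll
from sklearn.decomposition import PCA
from sklearn.manifold import TSNE, trustworthiness

# Assumption: a 3D swiss roll serves as the non-linear example.
X, _ = make_swiss_roll(n_samples=500, random_state=0)

# Linear projection onto the two directions of maximum variance.
pca_2d = PCA(n_components=2).fit_transform(X)

# Non-linear embedding that tries to keep each point's neighbors together.
tsne_2d = TSNE(n_components=2, perplexity=30, random_state=0).fit_transform(X)

# Trustworthiness lies in [0, 1]; higher means local neighborhoods are better kept.
pca_score = trustworthiness(X, pca_2d, n_neighbors=10)
tsne_score = trustworthiness(X, tsne_2d, n_neighbors=10)
print(f"PCA: {pca_score:.3f}  t-SNE: {tsne_score:.3f}")
```

On data like this one would typically expect t-SNE to score higher, but the exact numbers depend on the random seed and the parameters, so treat the comparison as indicative rather than conclusive.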